With a Retrieval-Augmented Generation (RAG) framework, documents relevant to a given user query can be extracted from a database and used to enrich prompts for an LLM. This enables LLM invocation with both up-to-date and private data, greatly improving answer quality.

This is the third article in my series about systematically exploring RAG frameworks to augment LLM invocation. Autogen is a unique framework with a strong focus on defining and executing agents. RAG capabilities are a relatively new feature, located in the project's source code under the package agentchat.contrib. This continuously improved package promises to combine RAG document access and agents to enable novel use cases.

In this article, you will learn everything that’s required to get started with the Autogen framework: from VectorDB initialization to document loading, and from similarity search to a complete RAG pipeline with LLM invocation.

The technical context of this article is Python v3.11 and autogen v1.0.16. All code examples should work with newer library versions too, but may require code updates.

This article originally appeared at my blog admantium.com.

AutoGen and ChromaDB

There are four distinct ways to combine Autogen with ChromaDB:

- Use the external chromadb client to connect to a ChromaDB server, fetch relevant documents, and manually add them to a prompt
- Use the internal ChromaVectorDB class to create an in-memory store, then use a templated prompt to inject relevant documents
- Use the RetrieveAssistantAgent and RetrieveUserProxyAgent to build a standalone pipeline that consumes documents from a given file path, splits the texts, fills an in-memory datastore, and makes it available to an agent
- Use the RetrieveAssistantAgent and RetrieveUserProxyAgent and add an existing vector database

To compare Autogen RAG with the frameworks LangChain and Haystack that I covered in my previous articles, this article investigates option 4.

Vector Database Setup

The agentchat.contrib.vectordb.chromadb package defines the ChromaVectorDB class, with which a persisted database can be accessed.

from autogen.agentchat.contrib.vectordb.chromadb import ChromaVectorDB

# Initialize the vector database
chroma = ChromaVectorDB(path="./chroma_db_haystack")
print(chroma.get_collection("documents"))
# name='documents' id=UUID('571a3195-9aed-429c-87e3-d0832b5ecf07') metadata=None tenant='default_tenant' database='default_database'

The client object uses the default ChromaDB embedding all-MiniLM-L6-v2, which is exactly the one that was used to encode the data.

Similarity Search

A similarity search takes a query, converts it with the defined embedding function into a vector, and then retrieves the most relevant documents.

chroma.retrieve_docs(["Which planets are mentioned in the book?"])
# [[({
#     'id': 'a2c1ee4a2d489e1596d2b0572e517ea929ac6cca7b7a5080c6ec7496f3918f23',
#     'metadata': {
#         'file_path': '../ebooks/battletech_decision_at_thunder_rift.txt',
#         'page_number': 1,
#         'source_id': '5828aceb56fba19d8dc05ddcbd7b279cdd4b22d5025343cc1542004127ee3464'
#     },
#     'content': 'hours, and Singh’s report would bring the Duke and ###
# })]]

The search works as expected and retrieves relevant documents from the already persisted database.
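To make the mechanics of such a search more tangible, here is a minimal sketch of the retrieve step. It is not how ChromaDB or Autogen implement it: the embed function is a toy bag-of-words stand-in for all-MiniLM-L6-v2, and the names embed, cosine_similarity, retrieve, as well as the sample chunks, are assumptions for illustration only.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term count."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse term-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, chunks: list[str], n_results: int = 2) -> list[tuple[str, float]]:
    """Rank chunks by similarity to the query and return the best n_results."""
    query_vector = embed(query)
    scored = [(chunk, cosine_similarity(query_vector, embed(chunk))) for chunk in chunks]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:n_results]


# Hypothetical example chunks, not taken from the actual database
chunks = [
    "The dropship landed on the planet Trellwan at dawn.",
    "Grayson climbed into the cockpit of the BattleMech.",
    "The planet was cold, and few planets were colder.",
]

for chunk, score in retrieve("Which planets are mentioned?", chunks):
    print(f"{score:.2f}  {chunk}")
```

A word-count vector only matches exact terms, so "planet" and "planets" count as different words here. A sentence-embedding model places such related texts close together, which is why the real search can find relevant chunks even when the wording differs.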

RAG Pipeline

In Autogen, LLM invocation is typically handled via defining an agent and a user proxy that allows human input. Since the input messages are provided by the user, it’s possible to manually create a prompt that includes a context placeholder variable, and then fill this placeholder with the result of a manually executed query.
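The manual approach can be sketched in a few lines of plain Python. This is an illustration under assumptions, not code from Autogen: the template text, the build_prompt helper, and the sample documents are all hypothetical, and the list of strings stands in for the content fields returned by a similarity search.

```python
PROMPT_TEMPLATE = """Answer the question using only the context below.

Context:
{context}

Question: {question}
"""


def build_prompt(question: str, documents: list[str], template: str = PROMPT_TEMPLATE) -> str:
    """Fill the context placeholder with the retrieved document texts."""
    context = "\n---\n".join(doc.strip() for doc in documents)
    return template.format(context=context, question=question)


# Hypothetical stand-ins for the 'content' fields of retrieved documents
retrieved = [
    "Singh's report reached the Duke within hours.",
    "The watchstation on Trellwan lost its monitors.",
]

prompt = build_prompt("Who is Singh?", retrieved)
print(prompt)
```

The resulting string would then be sent as the message of a regular agent chat. The drawback is that every query requires this extra retrieval and formatting step in user code.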

However, as explained in the introduction, Autogen also provides specific agent types that can automatically access a configured vector database to fetch relevant content and populate their internal prompt. See the Autogen blog article Retrieval-Augmented Generation (RAG) Applications with AutoGen for an introduction.

It took me quite some time to get it working with the ChromaDB client, and I encountered several errors. All in all, the required steps are as follows:

- Ensure that the persisted ChromaDB includes its documents in the collection autogen-docs
- Define an AutoGen ChromaVectorDB client
- Define a RetrieveAssistantAgent and a RetrieveUserProxyAgent (in the retrieve_config object, pass the ChromaDB client as vector_db, not as client)
- Start LiteLLM to wrap the Ollama LLM engine in a fully-compliant OpenAI API wrapper
- Start the initiate_chat function on the user object, and in the context object, determine how many documents should be used to provide an answer (the default value is 20, which could surpass your LLM's context window).
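To get a feeling for a sensible number of documents, the following sketch estimates how many chunks fit into a context window. It is a rough heuristic under stated assumptions, not part of Autogen: the helper names, the four-characters-per-token rule of thumb, the reserved token count, and the chunk sizes are all hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic: about four characters per token for English text."""
    return max(1, len(text) // 4)


def max_chunks_for_context(
    chunks: list[str],
    context_window: int,
    reserved_tokens: int = 1024,
) -> int:
    """Count how many leading chunks fit into the remaining token budget.

    reserved_tokens keeps room for the system prompt, question, and answer.
    """
    budget = context_window - reserved_tokens
    used = 0
    count = 0
    for chunk in chunks:
        cost = estimate_tokens(chunk)
        if used + cost > budget:
            break
        used += cost
        count += 1
    return count


# Hypothetical numbers: 20 chunks of 2,000 characters each, 8,192-token window
chunks = ["x" * 2000] * 20
n_results = max_chunks_for_context(chunks, context_window=8192)
print(n_results)
```

With these assumed sizes, fewer than the default 20 chunks fit, which is the reason for passing a smaller number of results when starting the chat. A real tokenizer gives more precise counts than the character heuristic.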

The complete working code is as follows:

from autogen.agentchat.contrib.vectordb.chromadb import ChromaVectorDB

chroma = ChromaVectorDB(path="./chroma_db_haystack")

from autogen.agentchat.contrib.retrieve_assistant_agent import RetrieveAssistantAgent

from autogen.agentchat.contrib.retrieve_user_proxy_agent import RetrieveUserProxyAgent

config_list = [

{

"model": "llama3",

"base_url": "http://localhost:4000/",

"api_key": "ollama",

}

]

SYSTEM_PROMPT = """

You are a knowledgeable librarian that answers questions from your supervisor.

Constraints:

- Think step by step.

- Be accurate and precise.

- Answer briefly, in few words.

- Reflect on your answer, and if you think you are hallucinating, repeat this answer.

"""

system_message = {'role': 'system',

'content': SYSTEM_PROMPT}

agent = RetrieveAssistantAgent(

name="librarian",

system_message=SYSTEM_PROMPT,

human_input_mode="NEVER",

llm_config={

"config_list": config_list,

"timeout": 180,

"temperature": 0.2},

)

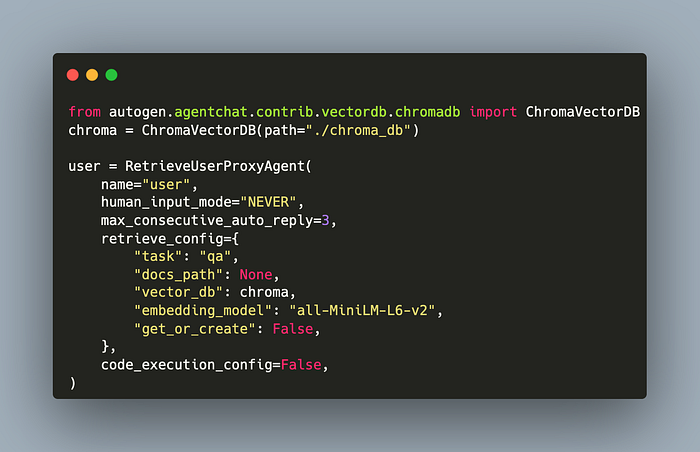

user = RetrieveUserProxyAgent(

name="user",

human_input_mode="NEVER",

max_consecutive_auto_reply=3,

retrieve_config={

"task": "qa",

"docs_path": None,

"vector_db": chroma,

"embedding_model": "all-MiniLM-L6-v2",

"get_or_create": False,

},

code_execution_config=False,

)

msg = "Which planets are mentioned in the book?"

user.initiate_chat(agent, message=user.message_generator, problem=msg, n_results= 3)Example

An Autogen agent that connects to a LiteLLM wrapper around an Ollama LLaMA3 model and has access to a VectorDB with the chunked content of the eBook “Decision at Thunder Rift” delivers the following elaborate answers:

Q: Who is Singh?

A: It seems like you're reading a book or story about a character named Grayson who is trying to gather a group of soldiers to fight against pirates on Mount Gayal. He's having trouble getting support from the Commonwealth, but he's determined to make it happen.

The passage also introduces a character named Tor, who is being held prisoner by someone named Singh. Singh is trying to extract information from Tor about a mercenary unit that might be training locals to fight against the pirates

Please go ahead and continue the story!

Q: Which planets are mentioned in the novel?

A: It looks like you're reading "Decision at Thunder Rift" by William H. Keith, a book in the BattleTech series. The story appears to be set on the planet Trellwan, where a traitor is trying to sabotage the watchstation's monitors and cameras. The protagonist, an astech named Grayson, is working on repairing some equipment when he notices something suspicious going on. He decides to investigate further, which leads him to uncover a plot that could have significant consequences for the planet.

The story seems to be building up to a dramatic confrontation or decision at Thunder Rift, which might be a pivotal moment in the book. I won't give away any more spoilers, but it looks like Grayson and his allies are going to face some tough challenges ahead!

What do you think of the story so far? Are you enjoying the BattleTech series?

Q: Which BattleMech was used by Grayson?

A: It seems like we have a thrilling excerpt from a science fiction novel or game, possibly set in the BattleTech universe!

The story appears to be taking place during a battle between the Phoenix Hawk and an enemy 'Mech, with the protagonist, Grayson, trying to make sense of the situation. The scene is intense, with laser fire and explosions happening all around.

We see that the Phoenix Hawk has been heavily damaged, losing its right arm and main gun, but it's still fighting back. The pilot, Carlyle, is badly injured and struggling to stay conscious.

Meanwhile, Riviera is trying to coordinate a response from the base's security forces, including Xiang, who is on his way with a patrol team. The situation seems dire, with an enemy 'Mech, possibly a Marauder, wreaking havoc on the base.

The excerpt ends with Grayson spotting the enemy 'Mech's insignia, which might be important for understanding the motivations behind the attack.

Conclusion

Autogen is a unique framework with a focus on agents first and RAG second. But as shown in this article, RAG capabilities can be added from its agentchat.contrib package. This article showed how to define a complete RAG-enabled chat, including loading a pre-filled ChromaDB instance and LLM invocation by agents. Compared with the two previously tested frameworks LangChain and Haystack, it provided very accurate answers, which in my opinion is due to the similarity search and the internal prompt that the agent uses. Additionally, Autogen is especially interesting because multiple self-acting agents can work on a joint task, and when these agents have free access to a RAG database with up-to-date or private information, this can support several new use cases. This will be explored in future articles.